When Workers Train Robots with Smart Glasses

How to Train a Robot

“Humans perform a wide range of dexterous tasks in natural environments every day representing an untapped, renewable source of rich, real-world data.”

When workers train robots

In a quiet but profound shift on factory floors and in warehouses, a new form of job training is beginning to show up. Ironically, it’s not how bosses, workers, or even the rest of us thought automation would play out. The expectation was that people would be on the production lines one day and then, not so suddenly thereafter, be replaced by robots.

Technology has a nasty habit of upending lots of preconceived notions. This time around, the workers targeted for replacement are playing a mega-role in making their replacement robots more comfortable and more confident at either making things or pushing cargo around a warehouse. Workers are now being enlisted to train the very iron-collar substitutes who are arriving to replace them.

No future human-robot collaboration here. No having beers after work with these dudes! It’s more like a Vulcan mind meld: years of learned techniques about how to perform on the job will now be extracted from the human workers and melded into a robot’s performance skills. Once complete, it’s bye-bye humans. And here’s where the technology comes into play.

Skipping the middleman

Smart glasses, developed for more advanced adventures, will now be used to extract the how-tos of expert job performance from humans. That ultimately means robots that are more skillful, more productive, and quicker to deliver ROI from day one on the job.

Additionally, when you think about it, smart-glasses technology now puts simulation labs, teleoperation training, and even laborious hard-coding or GenAI prompting at serious risk of going bye-bye.

The smart glasses are the conduits of extraction that capture the nuanced, first-person perspective of human labor, which now promises to revolutionize how robots learn.

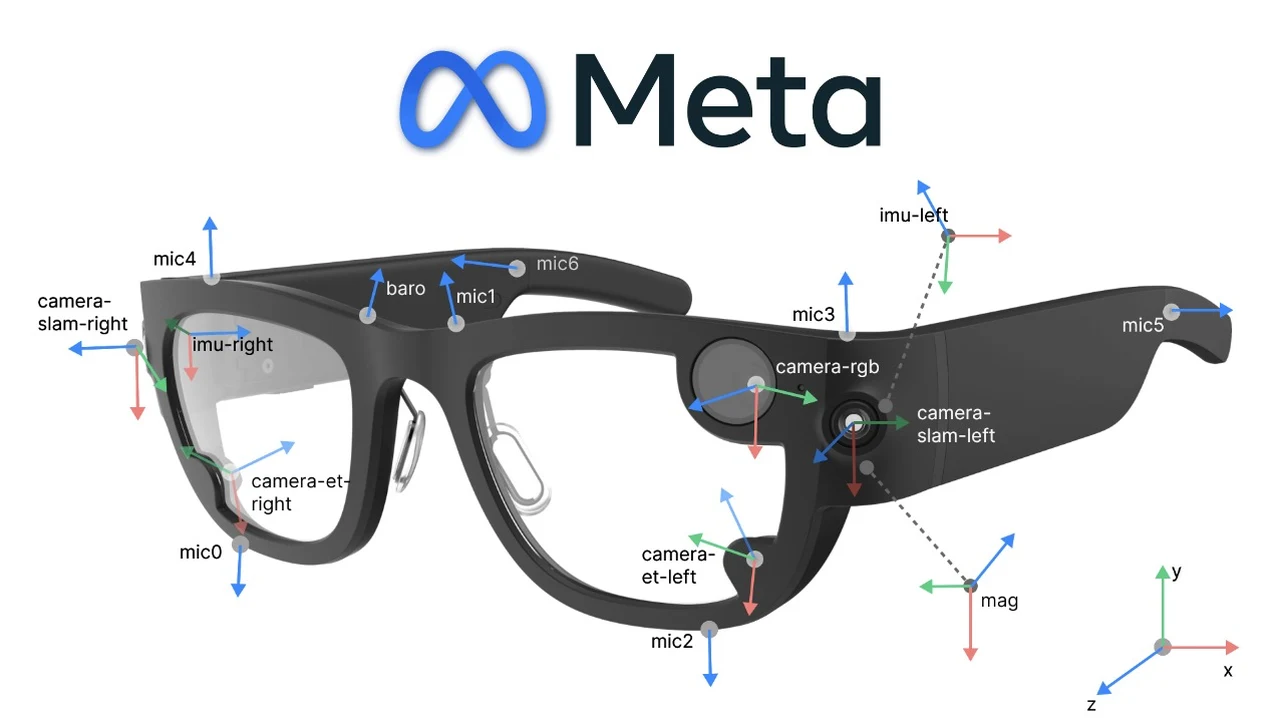

As Meta’s Aria glasses and research projects like EgoMimic are showing, robots can learn directly from people in action. The results? Faster learning, smarter automation, and an entirely new way for humans and machines to collaborate. In fact, EgoMimic achieved a 400% increase in robot performance using just 90 minutes of Aria recordings.

Traditional robot training has long been a bottleneck. It typically involves two cumbersome paths: meticulous programming by expert engineers or hours of teleoperation, where a human manually guides a robot’s limbs to demonstrate a task. Both are slow, expensive, and lack scalability. More recently, simulation labs, like those pioneered by NVIDIA, have offered a powerful alternative. In these synthetic digital worlds, thousands of virtual robots can practice a task simultaneously, learning through immense trial and error without the risk of damaging physical hardware. This method is fantastic for learning general physics and motion but often struggles with the “reality gap”—the subtle differences between a perfect simulation and the messy, unpredictable real world.

As the researchers for EgoMimic write: “Despite recent progress in general-purpose robotics, robot policies still lag far behind basic human capabilities in the real world. Humans interact constantly with the physical world, yet this rich data resource remains largely untapped in robot learning. Humans perform a wide range of dexterous tasks in natural environments every day representing an untapped, renewable source of rich, real-world data.”

This is where the smart glasses approach delivers a quantum leap. By outfitting human workers with devices like Meta’s Aria glasses, researchers can collect egocentric or “in-the-wild” demonstrations. As the worker goes about their normal duties (assembling a complex product, picking items from shelves, or operating machinery), the glasses record high-fidelity video, depth information, and inertial measurement unit (IMU) data. This creates a rich, first-person dataset of exactly how a task is performed by an expert: the glance towards a tool, the subtle grip on an object, the application of the right amount of force.
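To make the shape of such a dataset concrete, here is a minimal Python sketch of how one recorded demonstration might be organized. The class and field names (`EgoFrame`, `Demonstration`, `total_minutes`) are hypothetical and simply mirror the three streams named above; this is not the Aria file format or anything taken from the EgoMimic codebase.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class EgoFrame:
    """One time step of a first-person recording (hypothetical schema)."""
    timestamp_s: float                      # seconds since the recording started
    rgb_path: str                           # path to the video frame on disk
    depth_path: str                         # path to the matching depth map
    accel_mps2: Tuple[float, float, float]  # IMU linear acceleration, m/s^2
    gyro_radps: Tuple[float, float, float]  # IMU angular velocity, rad/s


@dataclass
class Demonstration:
    """A worker performing one task, stored as an ordered list of frames."""
    task: str
    frames: List[EgoFrame] = field(default_factory=list)

    def duration_s(self) -> float:
        # Length of the recording, from first to last frame.
        if len(self.frames) < 2:
            return 0.0
        return self.frames[-1].timestamp_s - self.frames[0].timestamp_s


def total_minutes(demos: List[Demonstration]) -> float:
    """Total recorded time across demonstrations, in minutes."""
    return sum(d.duration_s() for d in demos) / 60.0
```

A helper like `total_minutes` is the kind of bookkeeping that would tell a team how much footage it has collected against a target such as the 90 minutes cited for EgoMimic.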

The EgoMimic project’s results are staggering. Their research demonstrated that a humanoid robot could achieve a 400% increase in performance after training on just 90 minutes of recorded data from these smart glasses. This method, termed “Robot Learning from Human-Occupied Environments” (RLHOE), requires zero changes to the workspace and no direct interaction with a robot during data collection. The human is simply doing their job, all the while generating the most valuable curriculum a robot could ask for.

Superior to simulation? A question of fidelity and scale

The natural question is how this data-centric, real-world method compares to the massive scale of NVIDIA’s simulation labs. The answer is not that one replaces the other, but that they are complementary forces addressing different parts of the problem.

NVIDIA’s approach excels at teaching robots the “how” of movement—the fundamental mechanics of walking, grasping, and manipulating objects within the laws of physics. It can run through millions of iterations in a day, refining basic motor skills to a high degree of proficiency. However, it often lacks the specific, contextual “what” and “why” of a particular real-world task in a specific factory.

A simulation might teach a robot to grip a wrench, but not the precise sequence and reasoning a seasoned mechanic uses to loosen a specific bolt on a specific engine.

The missing context

The smart glasses method provides that missing context. It captures the authentic, expert human process in its native environment, with all its idiosyncrasies. It teaches the robot not just to perform an action, but to perform it the way the best human does. The reality gap is eliminated because the training data is reality. The limitation is scale and diversity; you are confined to the tasks and environments you can physically record. The future of robotic training likely lies in a hybrid model: using massive simulation to build robust foundational skills and then using targeted, real-world egocentric data to “fine-tune” the robot for a specific, complex job, effectively closing the reality gap for good.
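The hybrid idea can be illustrated with a toy Python sketch. Everything in it is an assumption made for illustration: the "policy" is a single number `w`, the simulated data is plentiful but slightly wrong (slope 2.0), and the real data is scarce but correct (slope 2.5). Real robot policies are large neural networks, so this shows only the pretrain-then-fine-tune pattern, not how EgoMimic or any simulation lab works.

```python
import random


def fit(w, data, lr, epochs):
    """Gradient descent on mean squared error for the 1-D model y = w * x."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def mse(w, data):
    """Mean squared error of the model y = w * x on a dataset."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


rng = random.Random(0)  # fixed seed so the run is repeatable

# "Simulation": 1000 samples, but generated with a slightly wrong slope.
sim_data = [(x, 2.0 * x) for x in (rng.uniform(-1, 1) for _ in range(1000))]

# "Real world": only 20 samples, generated with the true slope.
real_data = [(x, 2.5 * x) for x in (rng.uniform(-1, 1) for _ in range(20))]

# Stage 1: learn foundational behavior from the large simulated set.
w_sim = fit(0.0, sim_data, lr=0.1, epochs=500)

# Stage 2: fine-tune on the small real set, starting from the simulated result.
w_tuned = fit(w_sim, real_data, lr=0.1, epochs=500)

print("error on real data before fine-tuning:", mse(w_sim, real_data))
print("error on real data after fine-tuning: ", mse(w_tuned, real_data))
```

In this toy setting, the simulation-only model keeps a residual error on real data (its own small reality gap), and a handful of real samples is enough to remove it.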

The digital twin “revolution” and massive savings

This methodology is not just about training individual robots; it is the key to unlocking truly dynamic and accurate digital twins. A digital twin is a virtual replica of a physical system, used for simulation, analysis, and control. Today, creating and updating these twins is often a manual, painstaking process. Smart glasses automate this.

As workers wearing glasses move through a facility, they are continuously capturing data that can be used to build and, crucially, update the digital twin in real time.

If a production line is reconfigured or a new part is introduced, the human worker’s perspective through the glasses immediately feeds that change into the model. This creates a living, breathing digital twin that is always synchronized with its physical counterpart, making simulations more accurate and predictions more reliable. Rather than hindering digital twins, this human-collected data is the missing ingredient that will make them truly transformative.

The financial implications could well be enormous:

- Dramatically Reduced Training Time: Cutting robot programming and learning from weeks to days or even hours, as evidenced by EgoMimic’s 90-minute training period.

- Elimination of Downtime: No need to shut down a production line to program or train a robot on-site. Data collection happens during normal operations.

- Reduced Need for Highly-Specialized Robotics Engineers: The system leverages the expertise of the existing workforce, reducing dependency on scarce and expensive AI and robotics programmers.

- Faster Deployment and ROI: Companies can automate complex tasks much faster, seeing a return on their robotics investment in months rather than years.

Collectively, this could save companies tens of millions of dollars annually in large-scale manufacturing and logistics operations, fundamentally altering the cost-benefit analysis of automation.

The human cost: Faustian bargain

Beneath the breathtaking efficiency lies the profound ethical dilemma hinted at in the Instagram reel from China: this is human labor directly building the tool of its own obsolescence. There is an undeniable dystopian sheen to asking a worker to train their replacement, often without clear guarantees of retraining, reassignment, or a just transition.

Proponents argue that this simply continues the centuries-long trend of technology displacing certain jobs while creating new ones—in this case, perhaps more roles in robot supervision, data analysis, and system maintenance. They posit that it captures the valuable “tacit knowledge” of experienced workers before they retire, preserving their expertise indefinitely.

Yet, the moral burden on companies and policymakers is heavy. Implementing this technology must be paired with robust ethical frameworks: transparent communication with workers, significant investment in upskilling and reskilling programs, and potentially policies like shortened workweeks or profit-sharing models funded by the new efficiencies. The alternative—a world where workers are mere data points in their own elimination—is a socially corrosive path that the promises of efficiency and profit cannot justify.

The smart glasses on the factory floor are more than just sensors; they are a looking glass into our automated future. They reveal a path to unprecedented productivity, safer workplaces for dangerous tasks, and a seamless fusion of human intelligence and machine execution. But they also reflect a critical choice: will we use this powerful technology to elevate humanity or to simply replace it? The answer will define the next era of work.